Opinion: Social media companies should hire more moderators

In light of Frances Haugen’s congressional testimony, Facebook and similar companies should hire more content moderators to filter out harmful content.

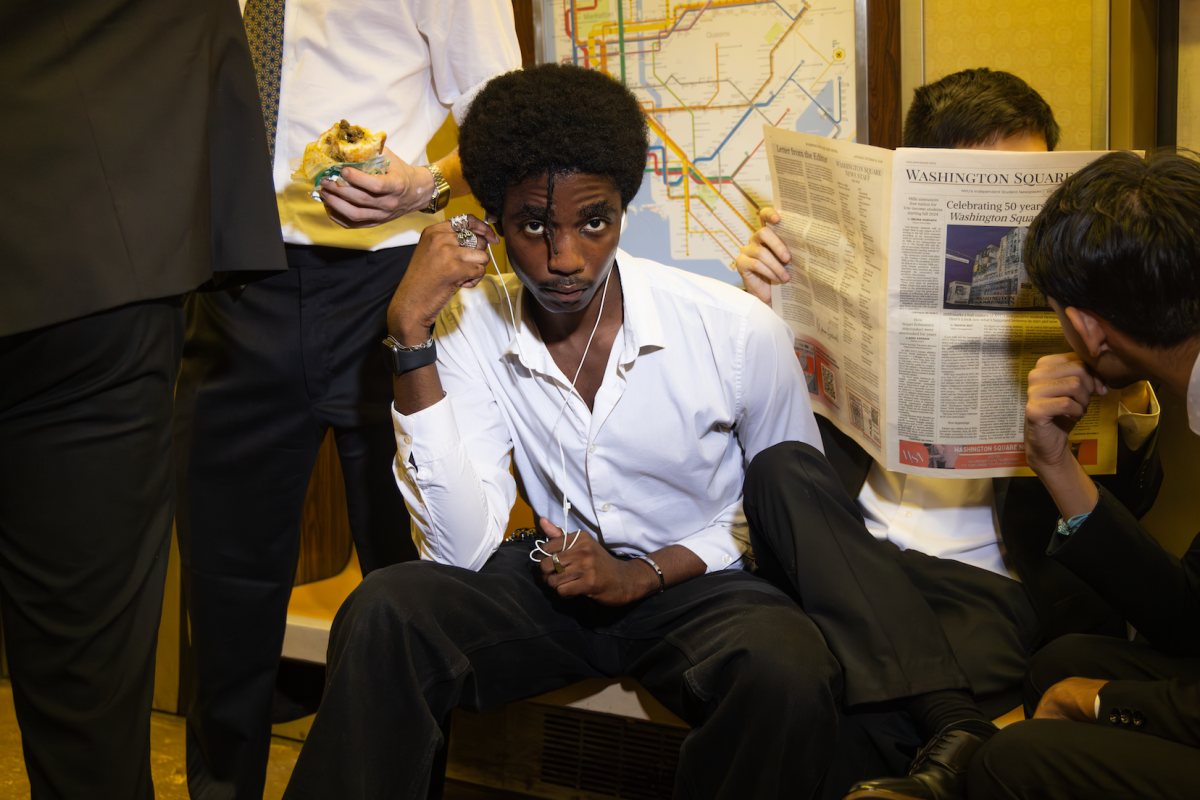

Facebook whistleblower Frances Haugen lifted the veil on the company’s harmful practices. The social media powerhouse must adopt new practices to regulate harmful content immediately. (Photo by Jorene He)

October 18, 2021

Earlier this month, Facebook whistleblower Frances Haugen spoke to Congress about the harms of Facebook, including the spread of misinformation and the damage to young adults’ mental health. Haugen revealed that, despite being acutely aware of the harm that Instagram does to young girls, Facebook has continued to push forward on its plan for a social media app for kids. As consumers, we deserve better experiences on these platforms so that we can protect our personal wellbeing.

The type of content we consume daily through social media apps can greatly affect the way we see ourselves, so it’s essential that people are not exposed to harmful graphics or images. Last year, an NYU Stern study called on Facebook to make internal improvements that would better regulate content on the platform. The researchers called for Facebook to hire more moderators in order to curtail the spread of harmful online content — and not just outsourced content moderators, who have proven less effective. In light of the recent Facebook hearings, Mark Zuckerberg and his board should follow NYU’s recommendations and implement these changes.

In my experience, my Instagram feed has made me feel inadequate. I felt like I wasn’t pretty or skinny enough, which in turn made me hyper-aware of my self-image. As someone who considers herself confident, becoming self-conscious about my body and what I present to the world made me feel out of control, and I’m not the only one who has had this experience.

The Instagram algorithm recommended content relating to eating disorders to a 13-year-old girl after her account suggested an interest in weight loss. The algorithmic nature of this issue is especially concerning, as Facebook and other companies are able to profit off consumers while feeding them harmful content. Increasing the number of moderators on the platform will help curb this harmful content. While Facebook devises a longer-term solution to the Instagram algorithm’s issues, moderators can serve as a temporary stopgap against displaying harmful content to young kids.

NPR reported earlier this year that the more content an individual consumes on these apps, the greater their risk of depression. Social media warps our perceptions of the realities of daily life. In light of Haugen’s testimony, we can more clearly see the link between the harmful content that Facebook’s algorithms recommend and the mental health of its users. The company has a moral obligation to do whatever it takes to make its products safe for users, and hiring more moderators is one way to achieve this.

Consumers deserve more transparency about where their personal data is going and about what information is circulating on these platforms, especially if that information is misleading or false. With more moderators, Facebook could more reliably remove misleading or harmful content and improve users’ experiences.

It is worth noting, however, the immense amount of pressure that Facebook content moderators face. They are tasked with moderating some of the most horrific content on the internet, and are often forced to work grueling hours viewing traumatizing images. Facebook must not only hire more moderators — it must also provide them with more support.

How can we continue to believe that social media companies are acting in our best interest when, time and time again, they have failed to address internal issues that harm their users? Facebook needs to make improvements by hiring more moderators as soon as possible.

A version of this story appeared in the Oct. 18, 2021, e-print edition. Contact Caroline Thoms at [email protected].