Eleven individuals were murdered two weeks ago at the Tree of Life synagogue, and it already feels like the world has forgotten. Since this attack, our news feeds have been filled with headlines of new tragedies. Another day, another tragic shooting, another hate-fueled controversy. Over the years, there has been a steady increase in the number of hate groups present in the United States, and the internet remains an unregulated platform for individuals to freely congregate on the basis of hate. Individuals are free to post sexist, racist and anti-semitic content online with no repercussions for their actions, leading to a toxic environment that encourages violence against other groups. Sites like Twitter and Facebook need to regulate their platforms more strictly to prevent the spread of these hateful ideas, and legal consequences should be enforced to hold individuals accountable for their posts online.

The First Amendment grants Americans the right to free speech. While this freedom allows individuals to criticize the nation and its leaders, when taken to the extreme, it can serve as a destructive force in society. Hate speech may not be illegal, but in the online realm, it is toxic and dangerous. The internet facilitates the growth of hateful ideologies because, in our echo chambers, these ideologies are perceived to be more widespread than they actually are. In other words, the bubbles that we limit ourselves to online warp our viewpoints, making us see the world through a distorted lens. While we may think our ideologies are common, they may really only be common in our immediate social media bubble. These ideas gain momentum as they disseminate and people come to view them as the norm, increasing the number of followers who are willing to bring these hateful remarks to the real world. In essence, the internet can contribute directly to the normalization of hate speech.

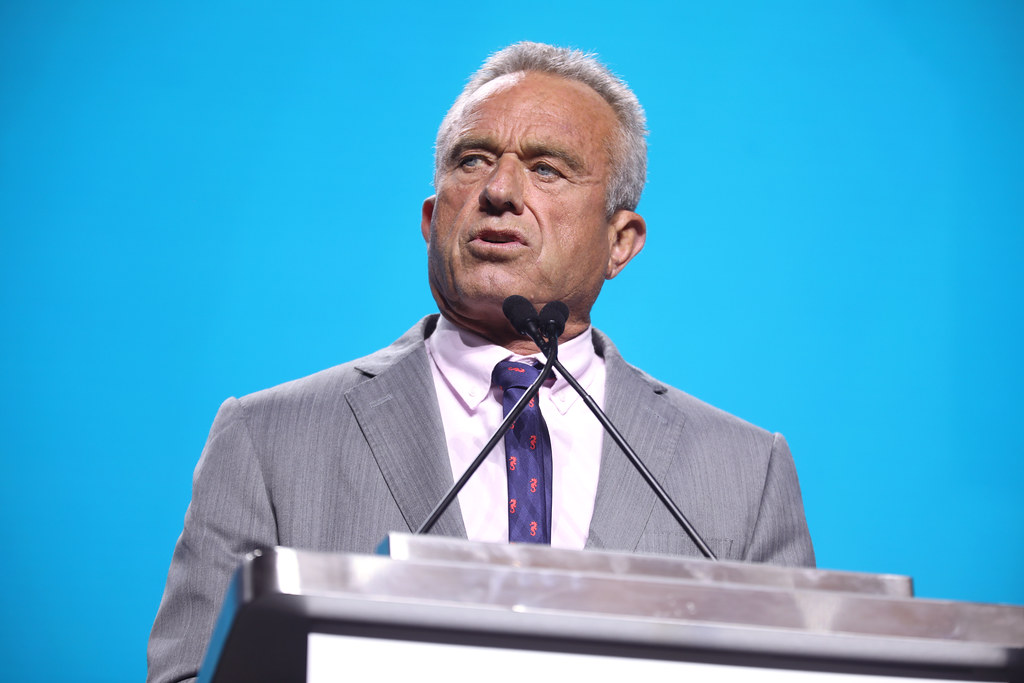

Prime examples of this exist in the cases of Cesar Sayoc, the pipe bomb suspect accused of sending explosives to Democratic figures and Trump critics, and Robert Bowers, the suspect in the Pittsburgh shooting at the Tree of Life synagogue. In both cases, the individuals shared their hateful sentiments online before turning to violence. Cesar Sayoc’s social media content once consisted mostly of real estate and sports, but after 2016, he began posting hateful, extremist right-wing content about conspiracy theories, ISIS and guns. Although Twitter was notified about his online behavior, it took no action against his account until it was too late.

Similarly, Robert Bowers was known for his strong online presence on Gab, a “free speech social network,” where he shared blatantly anti-semitic content such as: “Jews are the children of Satan – John 8:44.” In his last Gab post before the shooting, Bowers stated, “HIAS likes to bring invaders in that kill our people… Screw your optics, I’m going in.” While Bowers’ post was initially perceived as nothing out of the ordinary, the events that transpired on the morning of October 27th made it clear that he was in fact warning the internet of what he was about to do.

In both scenarios, there were warning signs of what would shortly unfold. Both Sayoc and Bowers spewed hateful ideas, propaganda and violent threats that went unacknowledged by social media sites. A system of online accountability could have prevented both tragedies. Unfortunately, sites like Facebook, Twitter and Gab only take action to remove hateful content after the fact, missing the chance to prevent violence.

In the age of technology, keyboard warriors — people who hide behind their screens while posting aggressive content online — are the norm, and so it is easy to dismiss online actions as non-threatening. However, the cases of Sayoc and Bowers reveal the danger keyboard warriors present to our communities. Therefore, it is critical that we disarm these threats at the source and enforce consequences for their online behavior. Sites like Facebook and Twitter should be more stringent with the content they allow on their platforms and better identify warning signs.

Authorities should also more closely monitor websites like Gab and 4chan, which harbor racist, bigoted and anti-semitic ideologies. Monitoring these sites and holding individuals like Sayoc and Bowers accountable for the threats they make online can help prevent future attacks. Of course, there are logistical difficulties with legal monitoring of websites like Gab and 4chan. As of March 2018, Gab had 394,000 users, and the number has been steadily growing. Users of social media platforms like Gab would also argue against government regulation, as they adamantly publicize their “commitment to free speech.” But it is important to acknowledge that while intimidation is illegal, there are no legal consequences for the same behavior online. Many individuals feel safe behind their screens because their actions have few apparent consequences. However, if online monitoring can force individuals to understand that their posts carry real consequences, they may think twice before preaching violence online.

What do SpaceX, a commercial leader in the aerospace world, and LELO, a European sex-toy company, have in common? They’re both pioneers in the tech world and simultaneously changing our lives in more ways than one. Just Tech-ing In will discuss socially and technologically relevant topics like forthcoming innovations, tech controversies, women in STEM and university updates on Tandon projects and startups.

Serena Vanchiro is a junior studying Mechanical Engineering at Tandon School of Engineering.

Opinions expressed on the editorial pages are not necessarily those of WSN, and our publication of opinions is not an endorsement of them.
