Students Create App To Translate Sign Language

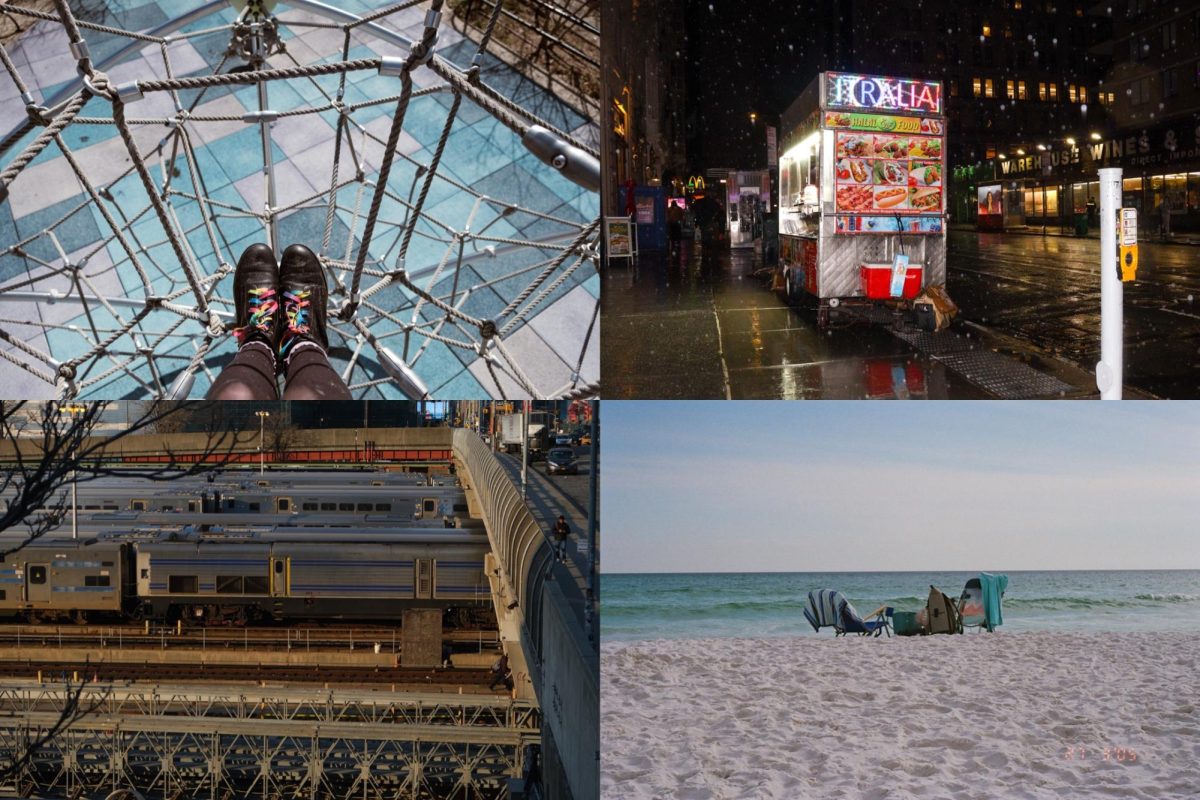

A demo of individuals utilizing the ARSL app to translate spoken word into sign language and vice versa.

April 10, 2018

Three NYU Tandon School of Engineering graduate students recently developed an application that translates sign language into readable text. The mobile app is called “Augmented Reality Sign Language” and can translate between different versions of sign language as well as between spoken language and sign.

The app lets a deaf user sign, then turns the signs into text and speech for the non-signing user. The non-signing user can speak into the app, which then translates the spoken words into sign language and text for the deaf user.

Zhongheng Li, a master’s student at Tandon, realized there was a gap to address when his friend’s parents, who are both deaf, moved from Hong Kong to New York City and did not know American Sign Language.

Earlier this year, Li, Jacky Chen and Mingfei Huang conducted interviews to understand what technology was already available and what was still needed. Through these interviews, they found that people with hearing impairments had limited access to mobile equipment and decided to build an app that could serve more people.

“You have to depend on [and] rely on somebody else to say something for you, so that’s something we were trying to bring around to our users to create independence — for them to just use a phone and talk to people,” Li said.

Li said deaf users prefer the digital human simulation because sign language has a different grammar structure than written English.

“English and American Sign Language — they’re totally different. For example, in English [you’d say], ‘I want to make an appointment,’ right? But in ASL, it [translates to] ‘make appointment want,’ so it’s [a] very different order.”

Chen believes a key part of the app is its ability to translate into multiple forms of sign language. There is no universal sign language, so signers from different regions often cannot communicate with each other in sign.

“There are so many different sign languages in the world,” Chen said. “What we want our app to do is actually also to translate between the different sign languages.”

Additionally, Chen emphasized the importance of having someone sign on screen for deaf users so they do not have to rely on text alone. The person signing on screen is a digital overlay of a human figure, implemented to improve user experience.

“The reason we have this [overlay] is because for sign language users their first language is actually sign language,” Chen said. “So we talked to them and they prefer visually seeing sign language over text because it’s easier. It’s quicker for them to understand.”

NYU Assistant Professor of Computer Science and Data Science Kyunghyun Cho shared his perspective on this development of technology and communication in an email to WSN.

“With the advances in machine translation technology along with mobile technology, it is increasingly becoming easier to translate from one language to another on-the-fly, which helps reducing the language barrier,” Cho said.

Cho also pointed out the limits of technologies like this one that people rely on for communication.

“It is, however, important for users to carefully understand the limitations of such technology,” he said. “The imperfection of such technology implies that translation may convey a wrong message or some of the details are incorrectly translated or missing.”

Email Darcey Pittman at [email protected]
